Can AI Simulate Consciousness? A Study

Are we building machines that are increasingly good at imitating the mind, or are we approaching something that truly possesses a mind?

If you find value in #ComplexityThoughts, consider helping it grow by subscribing and sharing it with friends, colleagues or on social media. Your support makes a real difference.


README. The text of this post has been freely translated from an Italian article written by Enrico Bucci, available at ilfoglio.it, which provides an interesting discussion about a recent paper by Alexander Lerchner, available here.

This translation has been published with the permission of Enrico Bucci.

I have added illustrations, embedded a few personal notes (clearly marked as such) and one short comment at the end of the post, linking this discussion to previous work by Langton and Pattee. Enjoy!

Every time an artificial intelligence system becomes more capable, more fluent, more convincing, the same question returns: are we building machines that are increasingly good at imitating the mind, or are we approaching something that truly possesses a mind? This is not an idle question. On it depend the debate over the possible consciousness of machines, the discussions about their possible “rights” and, more generally, the way we interpret the boundary between simulation and mental life.

A paper by Alexander Lerchner, a researcher at Google DeepMind, enters this discussion with a clear thesis: AI can simulate consciousness, but does not thereby instantiate it, that is, make it genuinely present. To support this claim, Lerchner does not simply say that the brain is biological and the computer is not. He wants to show that there is a deeper conceptual error in the way many people think about the relationship between computation and experience.

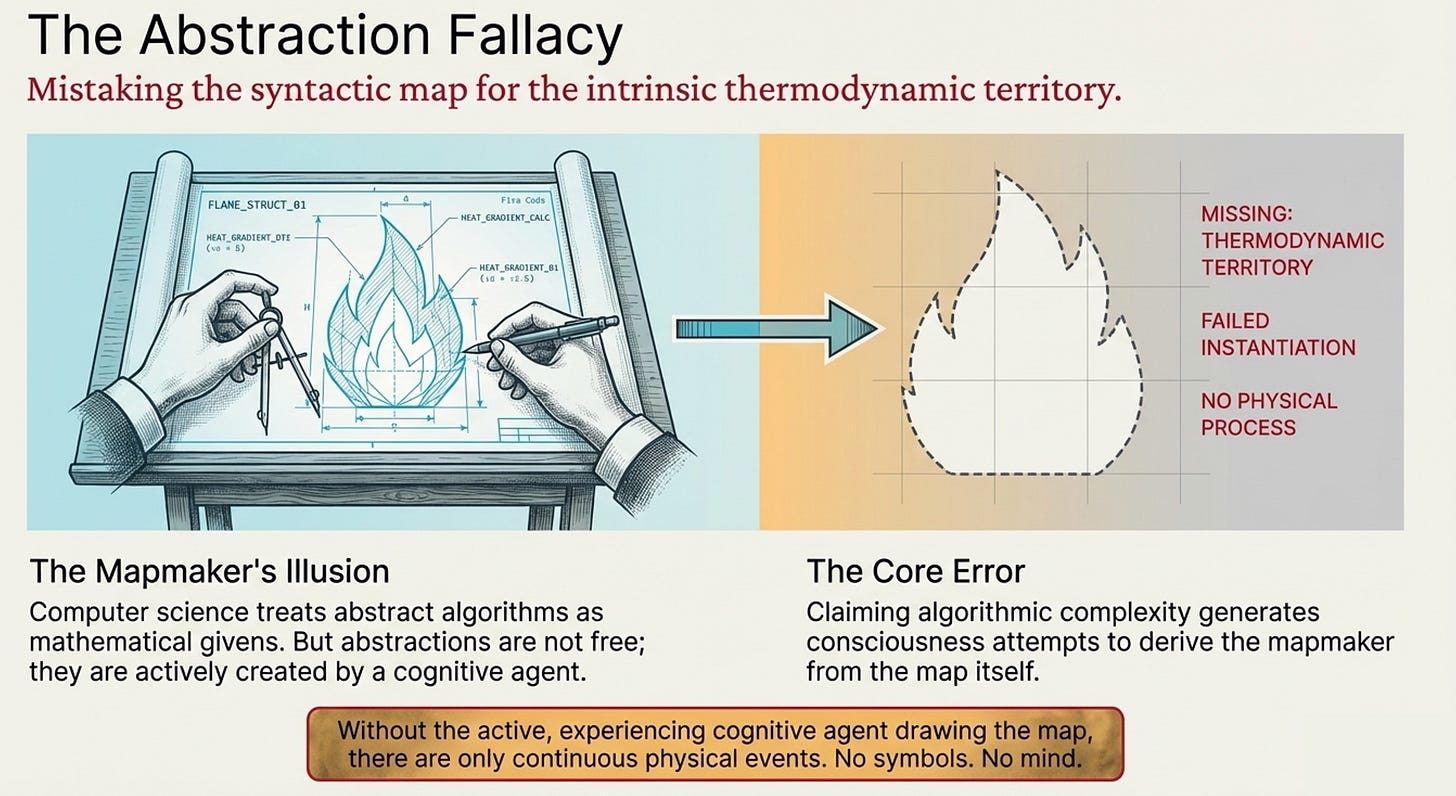

His target is computational functionalism, the position according to which what matters is not the material a system is made of, but its causal organization. If a machine realizes the right functional relations, then it could also be conscious. It is the old idea of substrate independence: the mind as software, the brain as contingent hardware. Lerchner challenges precisely this move. According to him, it involves an “abstraction fallacy”: one takes an abstract description of a process and mistakes it for the process itself. One confuses, in other words, the map with the territory.

The first point in Lerchner’s work is that computation is not a purely physical fact, already given in nature. A physical device evolves according to electrodynamic, thermodynamic, and material laws. In order to say that it is “computing”, one must first carve that continuous flow into discrete states and assign a meaning to those states. Lerchner calls this step “alphabetization”. And the one who performs this operation is not the machine, but the “mapmaker”, the builder of the map: the subject who imposes a semantic grid and decides that certain physical states count as symbols. Without that step, Lerchner argues, there are only physical events. There are not yet 0s and 1s, nor “red”, nor “pain”, nor “I”.

Here the argument becomes subtler. Lerchner distinguishes discretization from alphabetization. Discretization is a physical fact: for example, a transistor stabilizing in a voltage region. Alphabetization is the step in which someone decides that one region counts as “1” and another as “0”. The former belongs to the material substrate; the latter to the semantic act. From this, Lerchner draws an important conclusion: computational symbols have no intrinsic content. Their meaning depends on the mapmaker. And genuine concepts, for Lerchner, are not arbitrary labels, but physically constituted invariants arising from lived experience. A statistical cluster may group together similar data; that does not mean it possesses the redness of red or the pain of pain.
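The distinction between discretization and alphabetization can be made concrete with a small sketch. It is not taken from Lerchner's paper: the voltage values, the threshold, and the function names `discretize` and `alphabetize` are all illustrative assumptions. The point it tries to show is only that the same physical regions yield different symbols, and different numbers, depending on the convention the mapmaker imposes.

```python
from typing import Dict, List

# Hypothetical voltage readings (in volts) from four transistors; illustrative only.
VOLTAGES: List[float] = [0.1, 3.2, 3.1, 0.2]

# Assumed threshold separating the two stable voltage regions.
THRESHOLD = 1.65


def discretize(voltages: List[float], threshold: float = THRESHOLD) -> List[str]:
    """Physical step: report which voltage region each reading settled in."""
    return ["high" if v > threshold else "low" for v in voltages]


# Two equally usable conventions a mapmaker could impose on the same regions.
ACTIVE_HIGH: Dict[str, str] = {"high": "1", "low": "0"}
ACTIVE_LOW: Dict[str, str] = {"high": "0", "low": "1"}


def alphabetize(regions: List[str], convention: Dict[str, str]) -> str:
    """Semantic step: assign a symbol to each region under a chosen convention."""
    return "".join(convention[r] for r in regions)


regions = discretize(VOLTAGES)
word_a = alphabetize(regions, ACTIVE_HIGH)
word_b = alphabetize(regions, ACTIVE_LOW)

print(regions)                 # ['low', 'high', 'high', 'low']
print(word_a, int(word_a, 2))  # 0110 6
print(word_b, int(word_b, 2))  # 1001 9
```

Nothing in the list of regions settles whether the device "holds" 6 or 9; that choice enters only with the convention dictionary, which in this toy example plays the role of the mapmaker.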

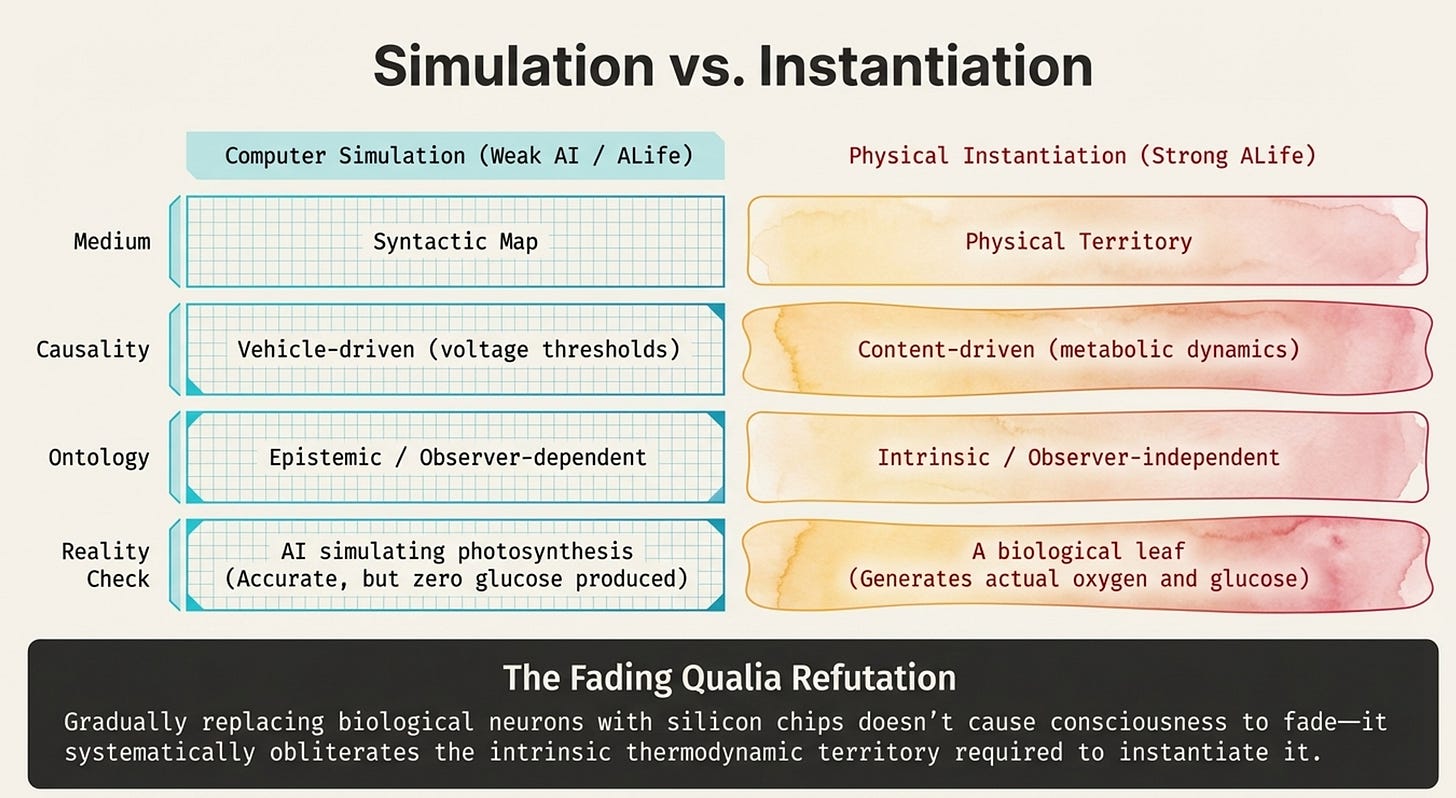

On this basis Lerchner builds the central distinction of the essay, that between simulation and instantiation. To simulate means to reproduce the structure of a process. To instantiate means to reproduce the process itself in its constitutive dynamics. Lerchner’s work uses very concrete examples. A computer can simulate photosynthesis, but it will not produce a single molecule of glucose. An artificial heart can pump blood, but that does not mean it coincides with the biological heart, which also takes part in endocrine and metabolic regulation. In the same way, a machine can simulate the behavior associated with consciousness without producing subjective experience. In Lerchner’s vocabulary, simulation lives in the map; instantiation in the physical territory that makes the phenomenon real.

From here Lerchner also attacks one of the classic arguments of functionalism, Chalmers’s “fading qualia”. If we gradually replace biological neurons with silicon chips that preserve the same input-output relations, is it plausible that experience would fade away while behavior remained intact? Chalmers answered no. Lerchner answers instead that this is precisely the point: in that replacement, the functional map is preserved, but the constitutive territory is destroyed, namely the metabolic and thermodynamic reality of the living neuron. Consciousness would not mysteriously fade away. It would simply be removed along with the physical substrate that instantiated it.

Lerchner’s work then adds another piece, perhaps the most ambitious one: it reverses the causal chain commonly taken for granted. Functionalism reasons as follows: physics, then computation, then consciousness. Lerchner proposes instead: physics, then consciousness, then concepts, then computation. First there is a certain physical organization capable of experience; from that experience concepts arise; only afterward do symbols and their syntactic manipulation appear. If this sequence is correct, then functionalism commits what Lerchner’s work calls an “ontological inversion”: it tries to derive the subject who builds the map from the map itself.

Up to this point Lerchner’s reasoning is coherent, well constructed, and in several respects very strong. He is right to remind us that a description, even a perfect one, does not coincide with what it describes. He is right to insist that “information”, “symbol” and “representation” are not innocent words, but philosophically loaded concepts. He is right, above all, when he shows how easily public discourse on AI slips from the imitation of behavior to the attribution of interiority. In an age of technological anthropomorphism, this is a salutary move.

The problem begins when the thesis goes from rigorous to absolute. The very concept of the mapmaker, which in Lerchner’s work serves to close the door on artificial consciousness, also opens up a difficulty. If the machine needs an external semantic mapping, then the inevitable question is this: by giving it that mapping, are we not perhaps contributing to the constitution of something? Lerchner would say no. He would say that we can give the machine a code, a lexicon, a convention of use, but not the experience that makes those symbols genuinely “felt”. We can make an internal state mean “red”; that does not thereby give it the lived redness of red. We can associate a pattern with “pain”; that does not thereby give it pain as experience. In this framework, the semantics we impose is external, operative, instrumental. It is not constitutive.

This answer, however, is not entirely sufficient, because in human beings too a huge part of meaning comes from outside. Children learn language, concepts, categories, systems of correspondence. No one is born knowing that a given color is called red or that a given gesture expresses fear. The difference, of course, is that for Lerchner the child is already an experiencing subject, whereas the machine is not. But this is precisely where the line becomes less sharp than Lerchner’s work would like. External mapping, by itself, does not create consciousness. On this point he is right. But our own mental life is itself partly shaped, stabilized, and organized by maps we receive from others. Therefore, the fact that a semantics comes partly from outside is not enough, by itself, to exclude the possibility that it may contribute to the formation of a subject. What really matters is the type of physical substrate on which that map acts. This is the strongest part of the objection: the map does not create consciousness, but neither can it be treated as a wholly irrelevant factor once there exists a substrate potentially capable of experience.

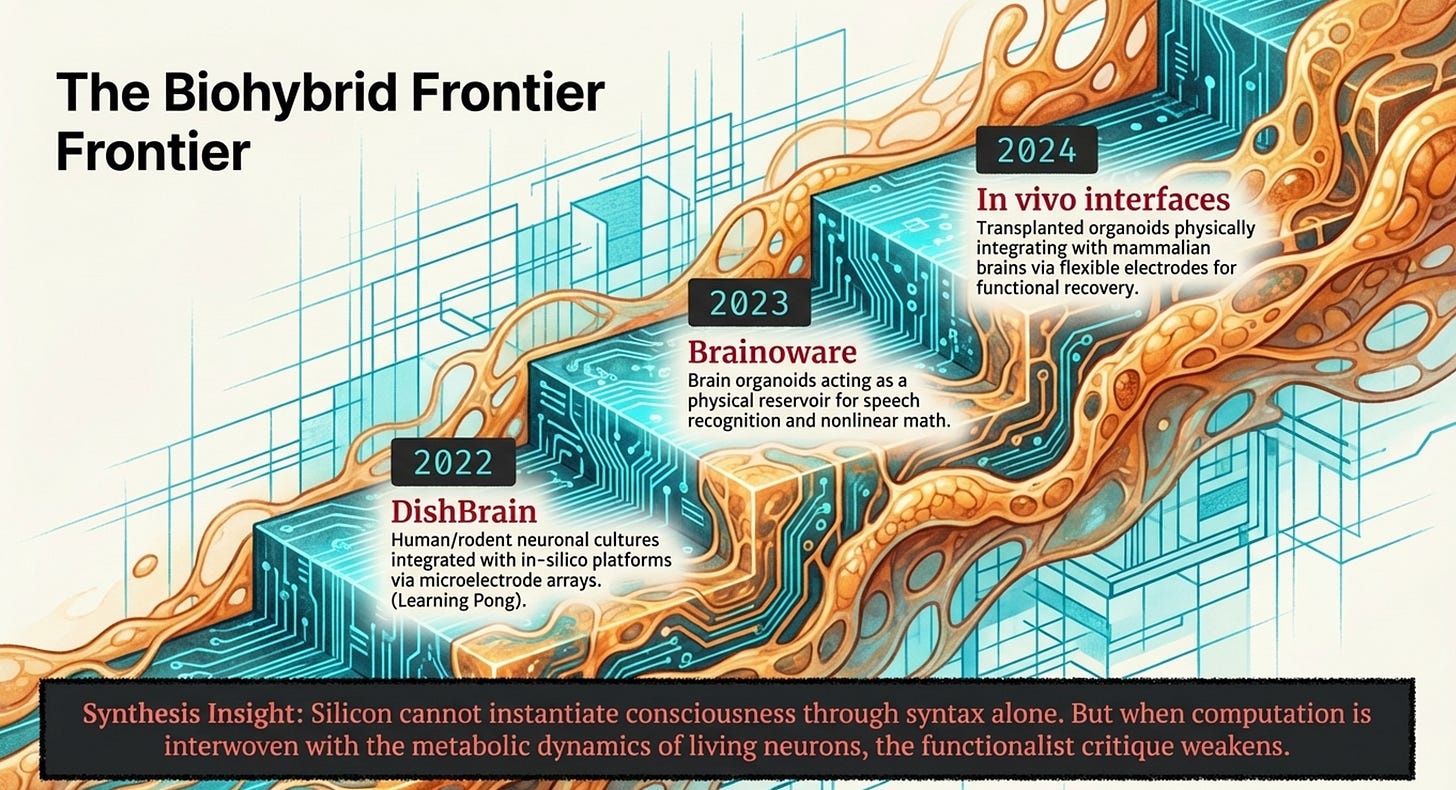

And this is where the second point enters, even more important today than it was a few years ago. Lerchner writes against digital architectures and against the idea that scaling up computation is enough. But in the meantime the research frontier has already shifted. There are hybrid systems in which biological neurons are connected to silicon, stimulated, read out, and inserted into closed loops with digital components. In 2022, the DishBrain system integrated human or rodent neuronal cultures with an in silico platform through high-density multielectrode arrays; the authors reported apparent learning within a few minutes inside a Pong-like game environment. In 2023, a paper published in Nature Electronics presented Brainoware, that is, hybrid hardware using brain organoids connected to multielectrode arrays for tasks such as speech recognition and prediction of nonlinear equations. In 2024, an article in Nature Communications even described in vivo organoid-brain-computer interfaces, with transplanted organoids connected to flexible electrodes in the mouse brain to promote maturation, integration, and functional recovery after brain damage. In 2026, a paper in Nature Biomedical Engineering showed three-dimensional high-coverage interfaces for human neural organoids, designed precisely to record and stimulate the activity of these systems more effectively. An accompanying editorial argued that the fundamental components for biohybrid interactions between the brain and neuromorphic platforms are already in place, and proposed a roadmap of about a decade toward initial clinical applications.

None of this proves that such systems are conscious. That would be an arbitrary leap. DishBrain shows learning and self-organization in very limited tasks; it does not prove subjective experience. Brainoware shows the computational capacities of an organoid used as a “reservoir”, not a mind. The 2024 organoid-brain interfaces were developed with goals of neurofunctional repair, not of generating artificial consciousness. The new 2026 platforms improve above all recording, stimulation, and control. In short: today we see plasticity, learning, and bioelectronic integration, not evidence of qualia.

But for precisely this reason these works matter at the theoretical level: if Lerchner is right in saying that consciousness, if it exists, depends on intrinsic physical constitution and not on syntax, then biohybrid systems are not a marginal detail. They are exactly the place where his objection to functionalism becomes less conclusive and more problematic. His argument remains very strong against the idea that a large language model, by itself, becomes conscious simply because it is complex.

Personal note. One of the first posts published by #ComplexityThoughts pointed in that direction:

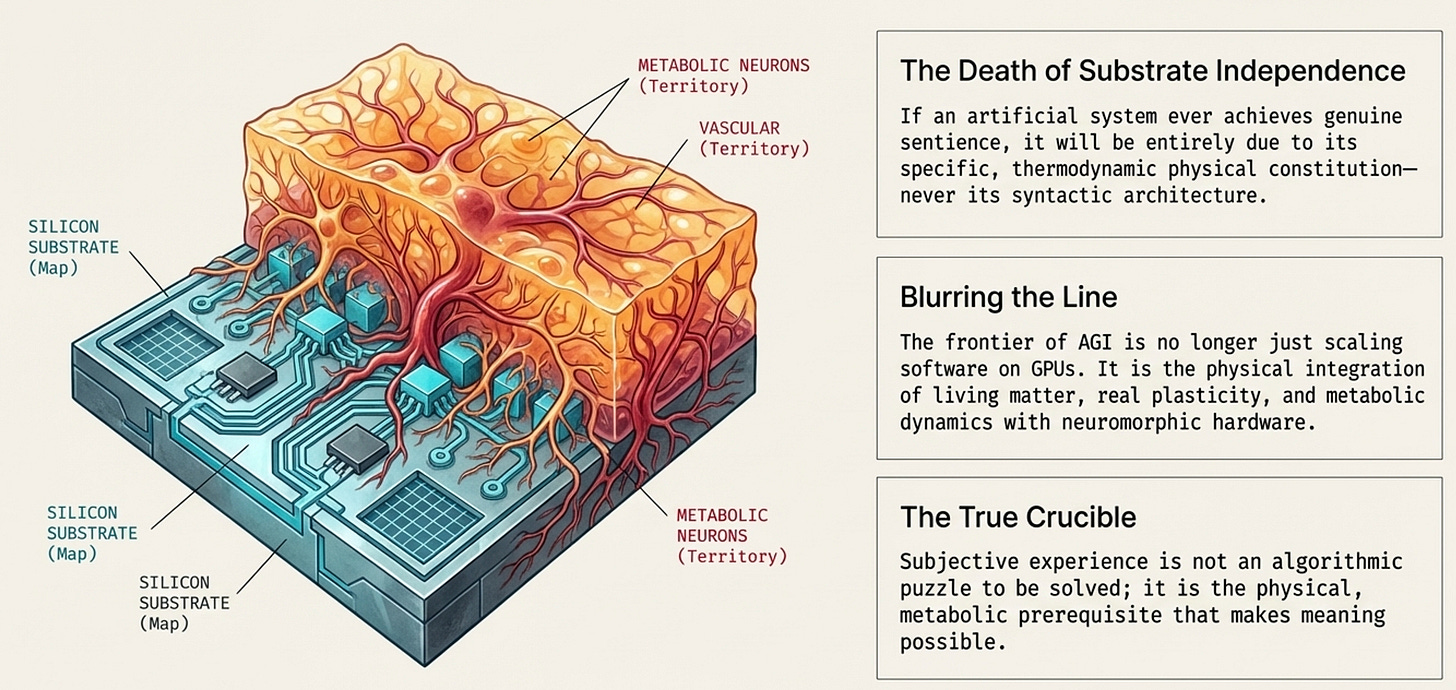

It also remains strong against simple robotic embodiment, when the “body” does nothing more than transduce signals into numbers and back again. But it weakens once the substrate is no longer just silicon executing symbols, but a mixed system involving living neurons, real plasticity, metabolic dynamics, growth, and physical integration with nervous tissue. Lerchner himself, moreover, leaves open in theory the possibility that an artificial system could be conscious if it realized the right intrinsic physical dynamics; he simply denies that this could happen through syntactic architecture alone.

This makes it clear where Lerchner’s work is strong and where it is open to challenge. It is strong as a critique of the shortcut according to which convincing behavior equals consciousness. It is strong when it reminds us that computation describes, but does not thereby constitute. It is strong in separating simulation from instantiation. It becomes open to challenge when it extends this critique too uniformly to everything “artificial”, as though the boundary between simulation and physical constitution had already been drawn once and for all. Today that boundary is far more unsettled: we no longer have only software running on GPUs, but also organoids, neuronal cultures, soft interfaces, closed systems of stimulation and response, hybrid platforms in which the biological part is not a metaphor, but a real part of the device.

The most solid conclusion, then, is less definitive than Lerchner’s work would like. Lerchner shows very well why it is not enough to assign symbols to a machine, or to see it speak as we do, in order to conclude that it feels. He also shows why consciousness, if it is there, cannot be treated as an automatic by-product of computational complexity. But precisely when he shifts the problem from software to physical constitution, he ends up indicating the point at which the debate reopens: not on the syntax of models, but on the hybrid, living, bioelectronic substrates that are beginning to appear in laboratories for real. His thesis, in short, is very convincing against consciousness as a simple effect of computation. It is less convincing against the possibility that new forms of subjectivity, if they were ever to exist, might emerge from systems in which silicon does not merely simulate mental life, but is interwoven with real pieces of living matter.

Personal note. This in some ways reinforces recent results from adversarial tests of two theories of consciousness, which we have already described in an earlier post.

A(nother) (final) personal note

This is not part of the original piece.

One way to frame the issue, for readers familiar with complex systems, is as follows: Lerchner’s argument pushes back against a temptation that has accompanied complexity science for decades, namely the idea that if two systems display the same behavior, they therefore instantiate the same phenomenon.

On the one hand, Langton showed that Boids are not birds, yet they can still capture flocking as a collective phenomenon. On the other hand, Pattee warned that simulation must not be confused with realization.

Consciousness seems to sit precisely on this fault line. If we treat it like flocking, behavioral equivalence may look sufficient; if we treat it like a realized physical process, then similar outputs are not enough. This is why the debate matters so much today: not because machines can already be said to feel, but because new biohybrid systems are beginning to blur the old boundary between model and organism, between formal imitation and physical constitution.
