Complexity Thoughts: Issue #82

Unraveling complexity: building knowledge, one paper at a time

If you find value in #ComplexityThoughts, consider helping it grow by subscribing and sharing it with friends, colleagues or on social media. Your support makes a real difference.


Foundations of network science and complex systems

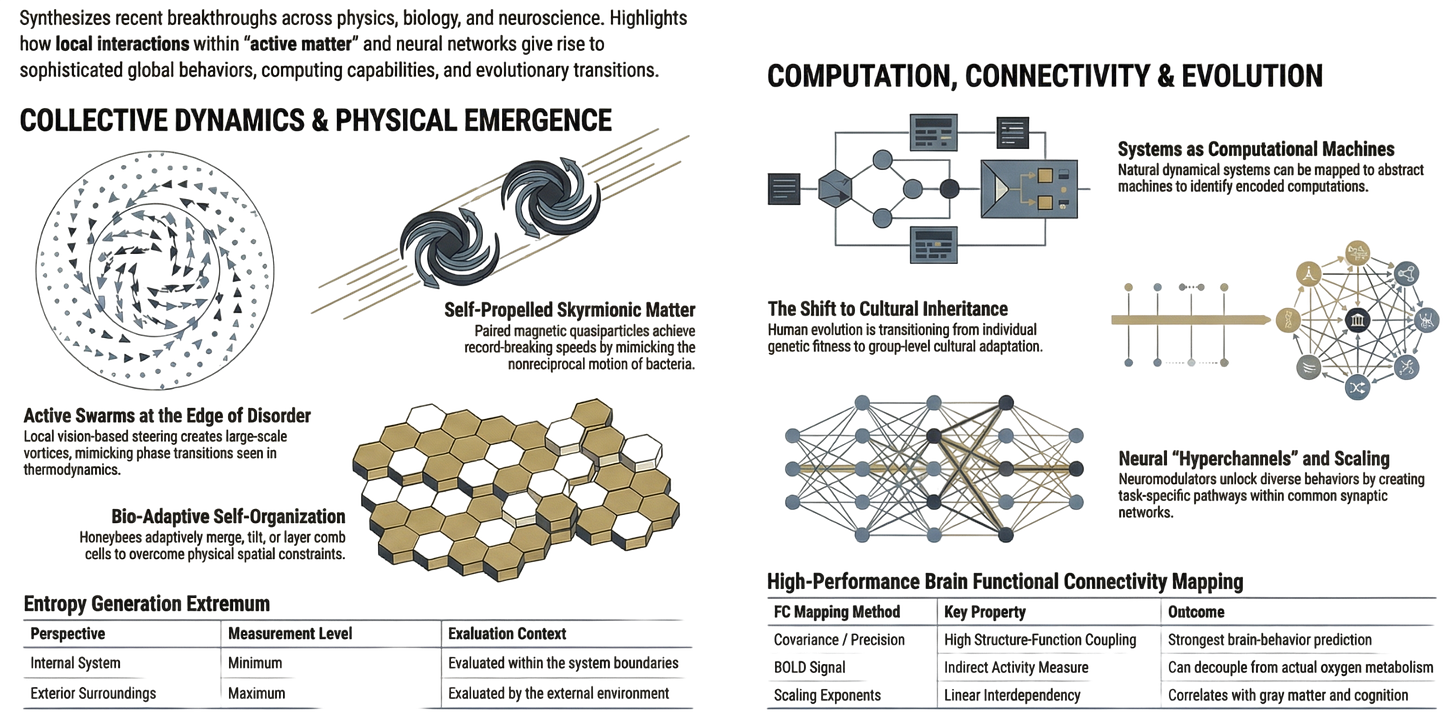

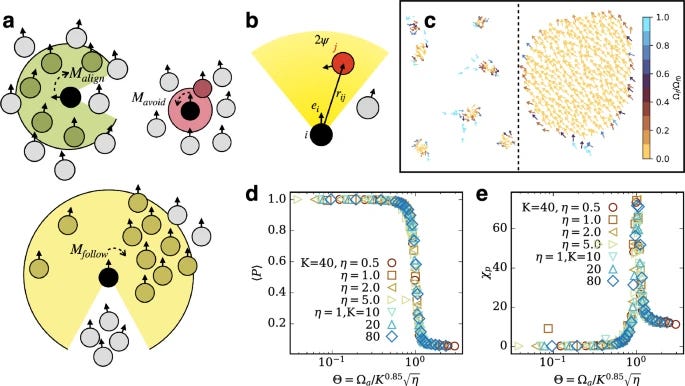

Dynamics of active swarms at the edge of disorder

Many animal groups form structures such as flocks and swarms. However, how can the individual agents reconcile the simultaneous requirements of local collision avoidance, alignment, and group cohesion to achieve coherent collective motion? Here, we propose a minimal flocking model, where each agent is capable of vision-based steering interactions to achieve these (conflicting) goals. Numerical simulations in two dimensions show that local collision avoidance acts as a source for emergent noise and induces an order-disorder transition, triggered by the fast response of the flock to local directional changes. The emergence of large vortices at the critical point hints at a Berezinskii-Kosterlitz-Thouless-like transition. Deep in the ordered phase, the cohesion acts like a surface tension, favouring compact flock shapes. The competing interactions lead to pronounced shape and density fluctuations of the flock. These large fluctuations can be important for a fast response to external cues, which aids predator evasion and foraging.
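To make the "order-disorder transition" concrete: a standard way to quantify order in a flock is the polarization, the length of the average heading vector. The abstract does not say which order parameter the authors use, so the sketch below is only an illustration under that assumption; the function name `polarization` and the toy headings are hypothetical.

```python
from math import cos, sin, hypot, pi


def polarization(headings):
    """Length of the mean unit heading vector (headings in radians).

    Values near 1 mean the agents move in the same direction (ordered);
    values near 0 mean the headings cancel out (disordered).
    """
    n = len(headings)
    mean_x = sum(cos(theta) for theta in headings) / n
    mean_y = sum(sin(theta) for theta in headings) / n
    return hypot(mean_x, mean_y)


# Toy data (assumed, not from the paper): ten agents sharing one heading,
# and ten agents split evenly between two opposite headings.
aligned = polarization([0.3] * 10)
opposed = polarization([0.0, pi] * 5)
```

Tracking this quantity while varying the strength of collision avoidance would be one way to locate a transition like the one described, although the paper may rely on other diagnostics.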

Emergent Self-Propulsion of Skyrmionic Matter in Synthetic Antiferromagnets

Read also this coverage.

Self-propulsion plays a crucial role in biological processes and nanorobotics, enabling small systems to move autonomously in noisy environments. Here, we theoretically demonstrate that a bound skyrmion-skyrmion pair in a synthetic antiferromagnetic bilayer can function as a self-propelled topological object, reaching speeds of up to a hundred million body lengths per second—far exceeding those of any known synthetic or biological self-propelled particles. The propulsion mechanism is triggered by the excitation of back-and-forth relative motion of the skyrmions, which generates nonreciprocal gyrotropic forces, driving the skyrmion pair in a direction perpendicular to their bond. Remarkably, thermal noise induces spontaneous reorientations of the pair and momentary reversals of the propulsion, mimicking behaviors observed in motile bacteria and microalgae.

What does it mean for a system to compute?

Many real-world dynamic systems, both natural and artificial, are understood to be performing computations. For artificial dynamic systems explicitly designed to perform computation, such as digital computers, we can identify by construction which aspects of the dynamic system match the input and output of the computation that it performs, as well as the aspects that match the intermediate logical variables of that computation. In contrast, in many naturally occurring dynamic systems that we understand to be computers, such as the human brain, which we neither designed nor constructed, it is not a priori clear how to identify the computation we presume to be encoded in the dynamic system. Regardless of their origin, dynamic systems capable of computation can, in principle, be mapped onto corresponding abstract computational machines that perform the same operations. In this paper, we begin by surveying a wide range of dynamic systems whose computational properties have been studied. We then introduce a very broadly applicable framework that defines the computation performed by a given dynamic system in terms of maps between that system’s evolution and the evolution of an abstract computational machine. We illustrate this framework with several examples from the literature, in particular discussing why some of those examples do not fully fall within the remit of our framework. We also briefly discuss several related issues, such as uncomputability in dynamic systems, and how to quantify the ‘value of computation’ in naturally occurring computers. We conclude with a discussion of some of the promising directions for future research.

Entropy generation: Minimum inside and maximum outside

The extremum of entropy generation is evaluated for both the maximum and minimum cases using a thermodynamic approach that is usually applied in engineering to design energy transduction systems. A new result in the thermodynamic analysis of the entropy generation extremum theorem is proved with this engineering approach. It follows from the proof that entropy generation is a maximum when it is evaluated from the exterior surroundings of the system and a minimum when it is evaluated within the system. The Bernoulli equation is analyzed as an example in order to evaluate the internal and external dissipations, in accordance with the theoretical results obtained.

Evolution

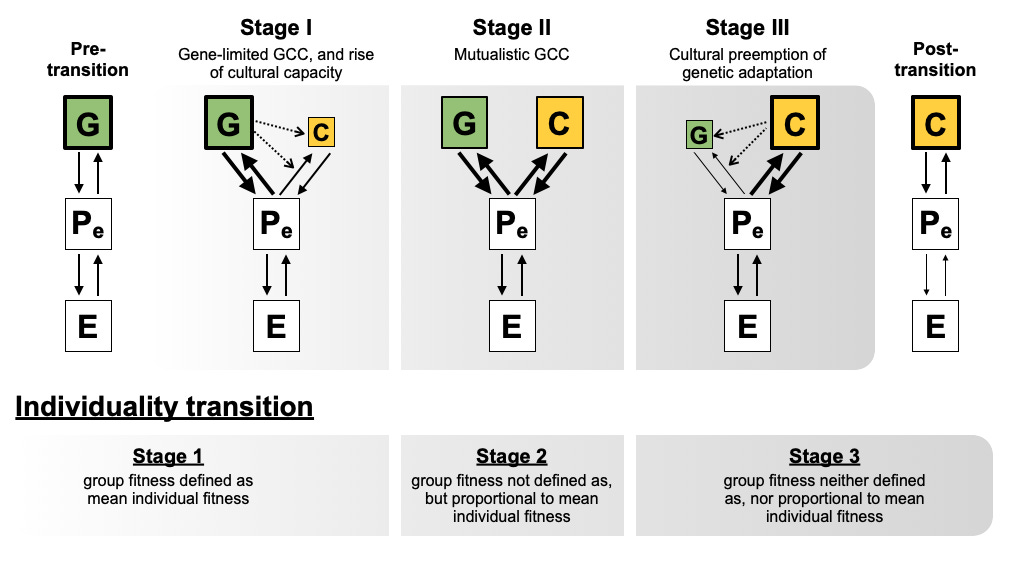

Cultural inheritance is driving a transition in human evolution

Human evolution may be shifting from a mainly genetic, individual-level process to a mainly cultural, group-level one. The motivation is a familiar puzzle: society and technology change so quickly that “human nature” can feel unstable, and cultural evolution may be the better lens for explaining that pace. The core idea is a coupled transition: cultural adaptation increasingly shapes survival and success more than genes do, and an individual’s outcomes are increasingly determined by the capabilities and institutions of the groups they belong to.

Rather than claiming humans are becoming genetically “superorganisms”, the framework emphasizes inheritance (culture becoming the dominant store of adaptive information) and asks for testable predictions (e.g., rising importance of group structure and cultural inheritance over time). The aim is to move cultural evolution from broad metaphor toward measurable hypotheses, and to refocus debates about human behavior on how collective infrastructures, norms and technologies channel fitness and wellbeing. Read also this piece about the paper.

Previous research on a transition in human evolution has been befuddled by the complexity of adaptive culture and made little effort toward empirical tests. We resolve these problems with a novel and testable theoretical mechanism. First, we explain how a differential in adaptive capacity between genetic and cultural inheritance could drive an evolutionary transition in both inheritance and individuality (ETII). We elaborate the ETII hypothesis and show it can resolve the prior debates, such as why genetic group selection is not a necessary precondition to transition. Next, we develop quantitative metrics of evolutionary transition in both phenotype and fitness to measure movement along this transition. We evaluate available evidence and find that patterns in gene–culture coevolution lend credence to the possibility of an ongoing transition. Finally, we derive a set of testable predictions and outline an agenda for future research.

Biological Systems

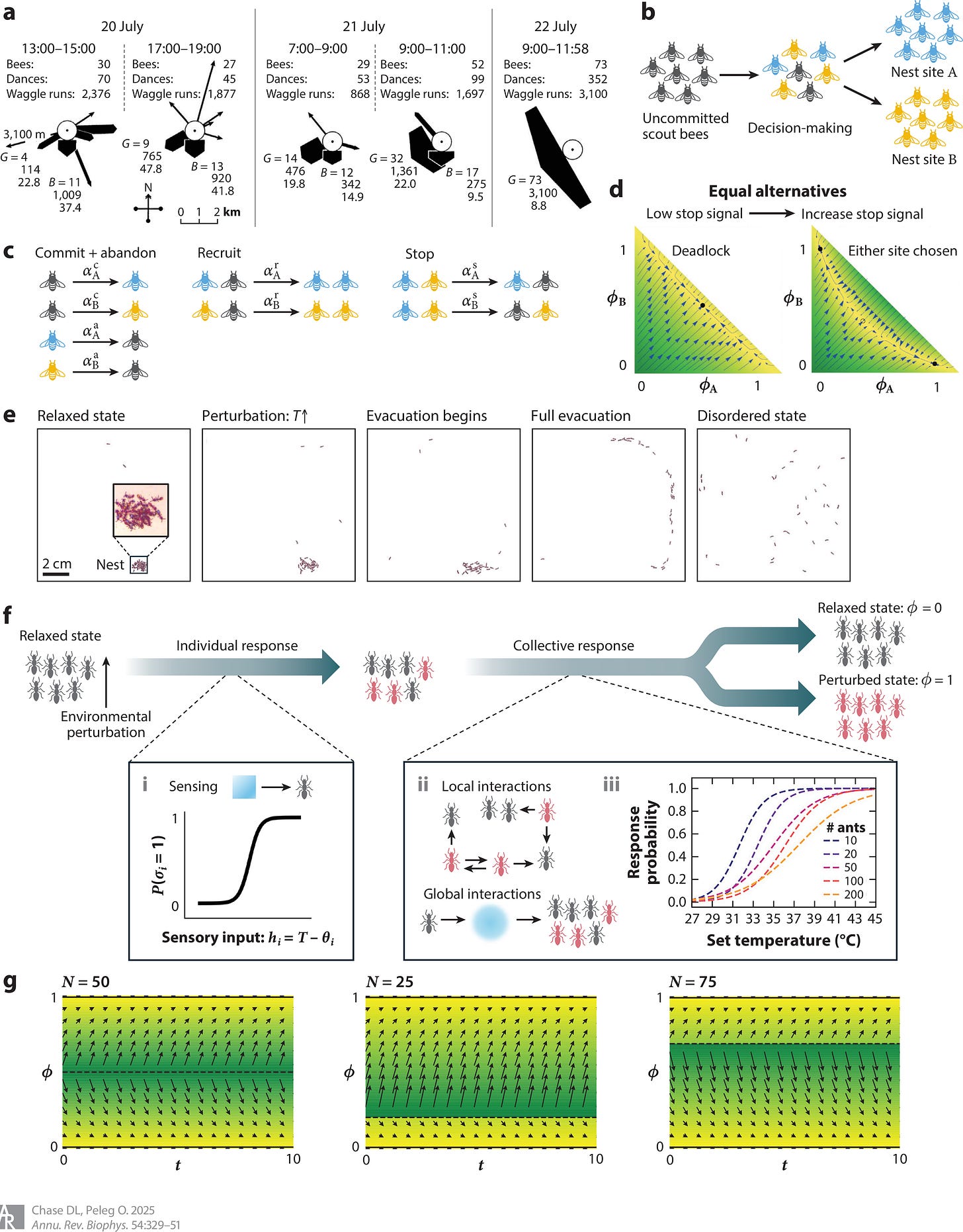

The Physics of Sensing and Decision-Making by Animal Groups

To ensure survival and reproduction, individual animals navigating the world must regularly sense their surroundings and use this information for important decision-making. The same is true for animals living in groups, where the roles of sensing, information propagation, and decision-making are distributed on the basis of individual knowledge, spatial position within the group, and more. This review highlights key examples of temporal and spatiotemporal dynamics in animal group decision-making, emphasizing strong connections between mathematical models and experimental observations. We start with models of temporal dynamics, such as reaching consensus and the time dynamics of excitation-inhibition networks. For spatiotemporal dynamics in sparse groups, we explore the propagation of information and synchronization of movement in animal groups with models of self-propelled particles, where interactions are typically parameterized by length and timescales. In dense groups, we examine crowding effects using a soft condensed matter approach, where interactions are parameterized by physical potentials and forces. While focusing on invertebrates, we also demonstrate the applicability of these results to a wide range of organisms, aiming to provide an overview of group behavior dynamics and identify new areas for exploration.

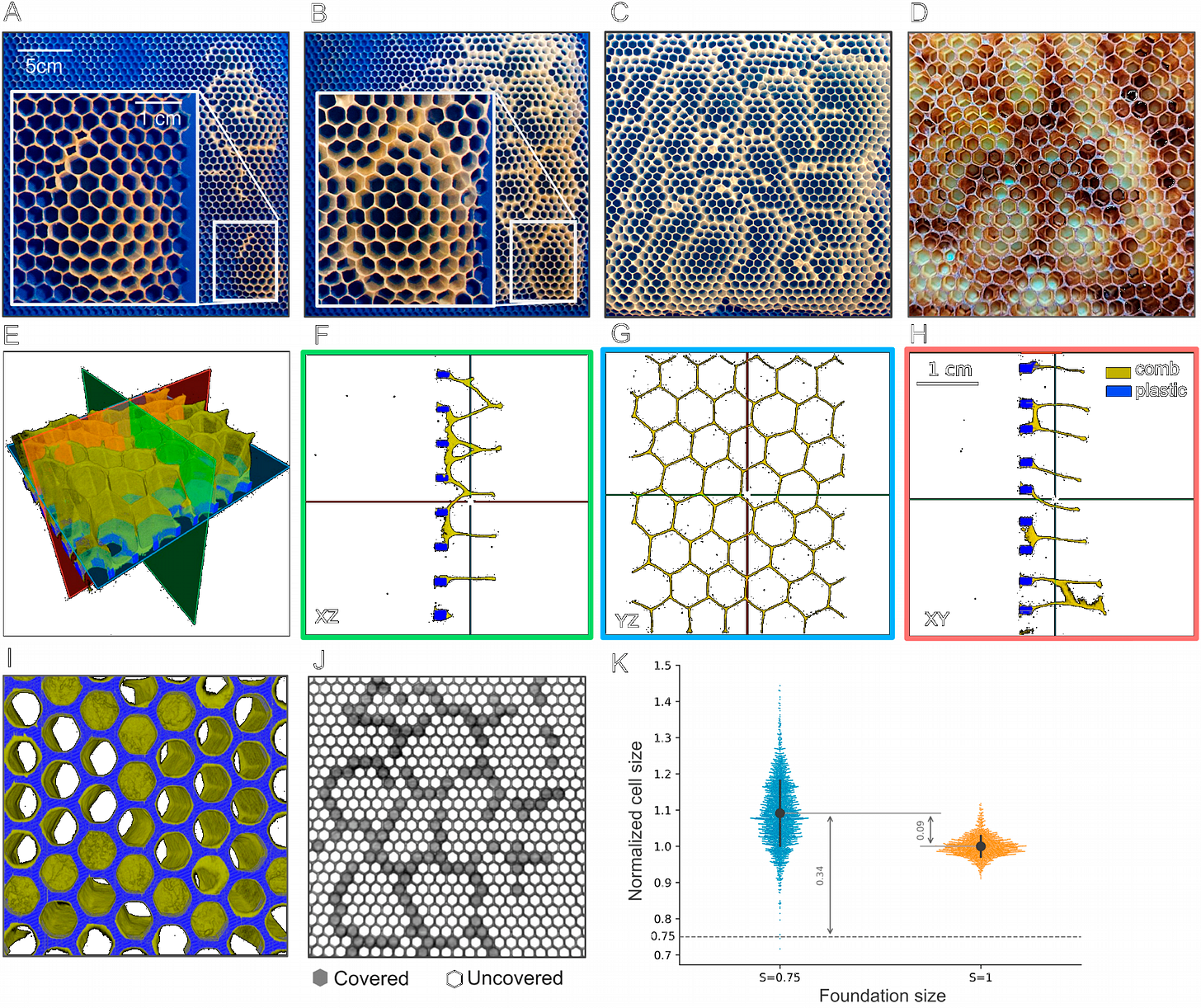

Honeybees adapt to a range of comb cell sizes by merging, tilting, and layering their construction

Honeybees are renowned for their skills in building intricate and adaptive combs that display notable variation in cell size. However, the extent of their adaptability in constructing honeycombs with varied cell sizes has not been thoroughly investigated. We use 3D-printing and X-ray microscopy to quantify honeybees’ capacity to adjust the comb to different initial conditions. Our findings suggest three distinct comb construction modes in response to foundations with varying sizes of 3D-printed cells. For smaller foundations, bees occasionally merge adjacent cells to compensate for the reduced space. However, for larger cell sizes, the hive uses adaptive strategies such as tilting for foundations with cells up to twice the reference size and layering for cells that are three times larger than the reference cell. Our findings shed light on honeybees’ adaptive comb construction abilities, significant for the biology of self-organized collective behavior, as well as for bio-inspired engineered systems.

Neuroscience

As with many creative human activities, physics resists definition. Physics, as the saying goes, is what physicists do. But we can recognize major themes. One theme is the reductionist search for the building blocks of matter. In another direction is the exploration of the origins and history of the universe writ large. At intermediate scales, we explore the emergence of macroscopic behaviors that are more than the sum of their parts. An important lesson of the late 20th century is that these seemingly distinct themes are intertwined, so that theoretical physics today is more unified while at the same time reaching ambitiously across an even broader range of topics. The 2024 Nobel Prize in Physics, awarded jointly to John Hopfield and Geoffrey Hinton, celebrates the discovery of new classes of emergent phenomena, inspired by the function of brains and minds.

Interdependent Scaling Exponents in the Human Brain

We apply the phenomenological renormalization group to resting-state fMRI time series of brain activity in a large population. By recursively coarse graining the data, we compute scaling exponents for the series variance, log probability of silence, and largest covariance eigenvalue. The scaling exponents clearly exhibit linear interdependencies in the form of scaling relations and inherent variability of values closely related to the structure of correlations of brain activity. The scaling relations between the exponents are derived analytically. We find a significant correlation of exponents with clinical (gray matter volume) and behavioral (cognitive performance) traits. Akin to scaling relations near critical points in thermodynamics, our results suggest that this interdependency is intrinsic to brain organization, and may also exist in other complex systems.
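For readers unfamiliar with the phenomenological renormalization group, the core loop can be sketched in a few lines: pair the most correlated variables, sum each pair, and track how a statistic such as the variance grows with cluster size. This is a minimal illustration of the general idea, not the authors' pipeline; the greedy pairing rule, the helper names (`coarse_grain`, `variance_exponent`) and the toy data are all assumptions, and the abstract does not specify normalization or preprocessing.

```python
from math import log, sqrt


def variance(x):
    """Population variance of one time series."""
    m = sum(x) / len(x)
    return sum((a - m) ** 2 for a in x) / len(x)


def correlation(x, y):
    """Pearson correlation; returns 0.0 if either series is constant."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y)) / len(x)
    denom = sqrt(variance(x) * variance(y))
    return cov / denom if denom > 0 else 0.0


def coarse_grain(series):
    """One step: greedily pair the most correlated series and sum each pair.

    With an odd number of series, the leftover one is dropped.
    """
    n = len(series)
    ranked = sorted(
        ((correlation(series[i], series[j]), i, j)
         for i in range(n) for j in range(i + 1, n)),
        reverse=True,
    )
    used, merged = set(), []
    for _, i, j in ranked:
        if i not in used and j not in used:
            used.update((i, j))
            merged.append([a + b for a, b in zip(series[i], series[j])])
    return merged


def variance_exponent(series, steps):
    """Slope of log(mean variance) versus log(cluster size).

    Assumes steps >= 1 and non-constant series (so variances are positive).
    """
    log_sizes, log_vars = [], []
    size = 1
    for step in range(steps + 1):
        mean_var = sum(variance(s) for s in series) / len(series)
        log_sizes.append(log(size))
        log_vars.append(log(mean_var))
        if step == steps or len(series) < 2:
            break
        series = coarse_grain(series)
        size *= 2
    mx = sum(log_sizes) / len(log_sizes)
    my = sum(log_vars) / len(log_vars)
    num = sum((x - mx) * (y - my) for x, y in zip(log_sizes, log_vars))
    den = sum((x - mx) ** 2 for x in log_sizes)
    return num / den


# Toy data (assumed): four perfectly correlated copies of one signal.
toy = [[0.0, 1.0, 0.0, 1.0, 0.0, 1.0] for _ in range(4)]
alpha = variance_exponent(toy, steps=2)
```

Perfectly correlated copies give an exponent close to 2, while fully independent series would give about 1; exponents between those limits are what signal nontrivial correlation structure.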

BOLD signal changes can oppose oxygen metabolism across the human cortex

Using quantitative fMRI, the authors show that across large cortical territories BOLD changes can decouple from oxygen metabolism, sometimes even reversing sign, so stronger BOLD does not reliably indicate greater neural energy use. This result motivates more cautious interpretation of BOLD and wider use of metabolic measurements alongside it.

Functional magnetic resonance imaging measures brain activity indirectly by monitoring changes in blood oxygenation levels, known as the blood-oxygenation-level-dependent (BOLD) signal, rather than directly measuring neuronal activity. This approach crucially relies on neurovascular coupling, the mechanism that links neuronal activity to changes in cerebral blood flow. However, it remains unclear whether this relationship is consistent for both positive and negative BOLD responses across the human cortex. Here we found that about 40% of voxels with significant BOLD signal changes during various tasks showed reversed oxygen metabolism, particularly in the default mode network. These ‘discordant’ voxels differed in baseline oxygen extraction fraction and regulated oxygen demand via oxygen extraction fraction changes, whereas ‘concordant’ voxels depended mainly on cerebral blood flow changes. Our findings challenge the canonical interpretation of the BOLD signal, indicating that quantitative functional magnetic resonance imaging provides a more reliable assessment of both absolute and relative changes in neuronal activity.

Neuromodulators Generate Multiple Context-Relevant Behaviors in Recurrent Neural Networks

Neuromodulators are critical controllers of neural states, with dysfunctions linked to various neuropsychiatric disorders. Although many biological aspects of neuromodulation have been studied, the computational principles underlying how neuromodulation of distributed neural populations controls brain states remain unclear. In contrast to external contextual inputs, neuromodulation can act as a single scalar signal that is broadcast to a vast population of neurons. We model the modulation of synaptic weight in a recurrent neural network model and show that neuromodulators can dramatically alter the function of a network, even when highly simplified. We find that under structural constraints like those in brains, this provides a fundamental mechanism that can increase the computational capability and flexibility of a neural network. Diffuse synaptic weight modulation enables storage of multiple memories using a common set of synapses that are able to generate diverse, even diametrically opposed, behaviors. Our findings help explain how neuromodulators unlock specific behaviors by creating task-specific hyperchannels in neural activity space and motivate more flexible, compact and capable machine learning architectures.
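The idea that a single broadcast scalar can switch the behavior of a fixed set of synapses can be seen in a deliberately tiny example. The sketch below is not the paper's model: the two-unit network, the weight matrix `W`, the `gain` parameter and the update rule are all assumptions chosen for illustration. It only shows that multiplying the same recurrent weights by a different scalar can move a network from a silent regime to a persistently active one.

```python
from math import tanh

# Fixed recurrent weights (hypothetical): two units exciting each other.
W = [[0.0, 1.5],
     [1.5, 0.0]]


def step(x, gain):
    """One discrete-time update: x <- tanh(gain * W @ x).

    `gain` plays the role of a neuromodulator: one scalar that scales
    every synaptic weight at once.
    """
    return [tanh(gain * sum(w * xj for w, xj in zip(row, x))) for row in W]


def run(gain, steps=200):
    """Iterate the network from the same initial state under a given gain."""
    x = [0.5, 0.5]
    for _ in range(steps):
        x = step(x, gain)
    return x


quiet = run(gain=0.5)   # effective coupling below 1: activity decays toward zero
active = run(gain=1.0)  # effective coupling above 1: activity settles at a nonzero state
```

The same synapses thus support two qualitatively different behaviors, selected only by the scalar. The networks in the paper are trained and far richer, so this should be read as intuition rather than a reproduction.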

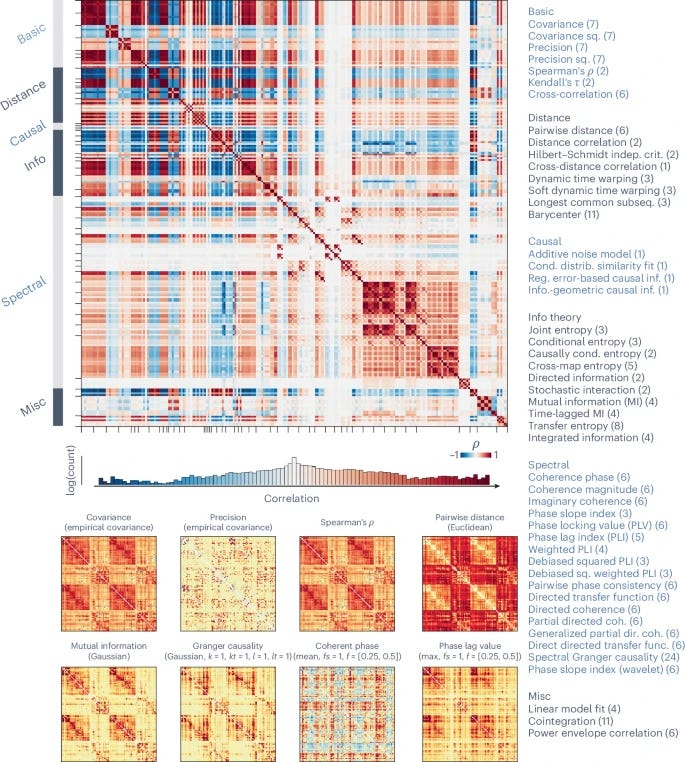

Benchmarking methods for mapping functional connectivity in the brain

The networked architecture of the brain promotes synchrony among neuronal populations. These communication patterns can be mapped using functional imaging, yielding functional connectivity (FC) networks. While most studies use Pearson’s correlations by default, numerous pairwise interaction statistics exist in the scientific literature. How does the organization of the FC matrix vary with the choice of pairwise statistic? Here we use a library of 239 pairwise statistics to benchmark canonical features of FC networks, including hub mapping, weight–distance trade-offs, structure–function coupling, correspondence with other neurophysiological networks, individual fingerprinting and brain–behavior prediction. We find substantial quantitative and qualitative variation across FC methods. Measures such as covariance, precision and distance display multiple desirable properties, including correspondence with structural connectivity and the capacity to differentiate individuals and predict individual differences in behavior. Our report highlights how FC mapping can be optimized by tailoring pairwise statistics to specific neurophysiological mechanisms and research questions.
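To see why the choice of pairwise statistic matters, it helps to build the FC matrix with the statistic as a swappable argument. The sketch below is a minimal illustration with two familiar statistics (Pearson correlation and covariance); it does not use the 239-statistic library from the paper, and the function names and toy regional time series are assumptions.

```python
from math import sqrt


def covariance(x, y):
    """Sample covariance of two equal-length time series."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    return sum((a - mx) * (b - my) for a, b in zip(x, y)) / (n - 1)


def pearson(x, y):
    """Pearson correlation, i.e. covariance normalized by both spreads."""
    return covariance(x, y) / sqrt(covariance(x, x) * covariance(y, y))


def fc_matrix(series, stat):
    """Functional connectivity matrix under a chosen pairwise statistic."""
    n = len(series)
    return [[stat(series[i], series[j]) for j in range(n)] for i in range(n)]


# Toy regional time series (assumed): region 1 is a scaled copy of region 0,
# region 2 is region 0 reversed in time.
regions = [
    [1.0, 2.0, 3.0, 4.0],
    [2.0, 4.0, 6.0, 8.0],
    [4.0, 3.0, 2.0, 1.0],
]
fc_corr = fc_matrix(regions, pearson)
fc_cov = fc_matrix(regions, covariance)
```

Correlation treats regions 0 and 1 as identical, whereas covariance also reflects the larger amplitude of region 1, so even this toy case yields different edge weights. Hub rankings and other downstream features can shift accordingly.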
